non-distributional/lexicons/antonyms.txt at master · mfaruqui/non-distributional · GitHub

By a mysterious writer

Description

Non-distributional linguistic word vector representations. - non-distributional/lexicons/antonyms.txt at master · mfaruqui/non-distributional

GitHub - alexpashevich/E.T.: Episodic Transformer (E.T.) is a novel attention-based architecture for vision-and-language navigation. E.T. is based on a multimodal transformer that encodes language inputs and the full episode history of visual

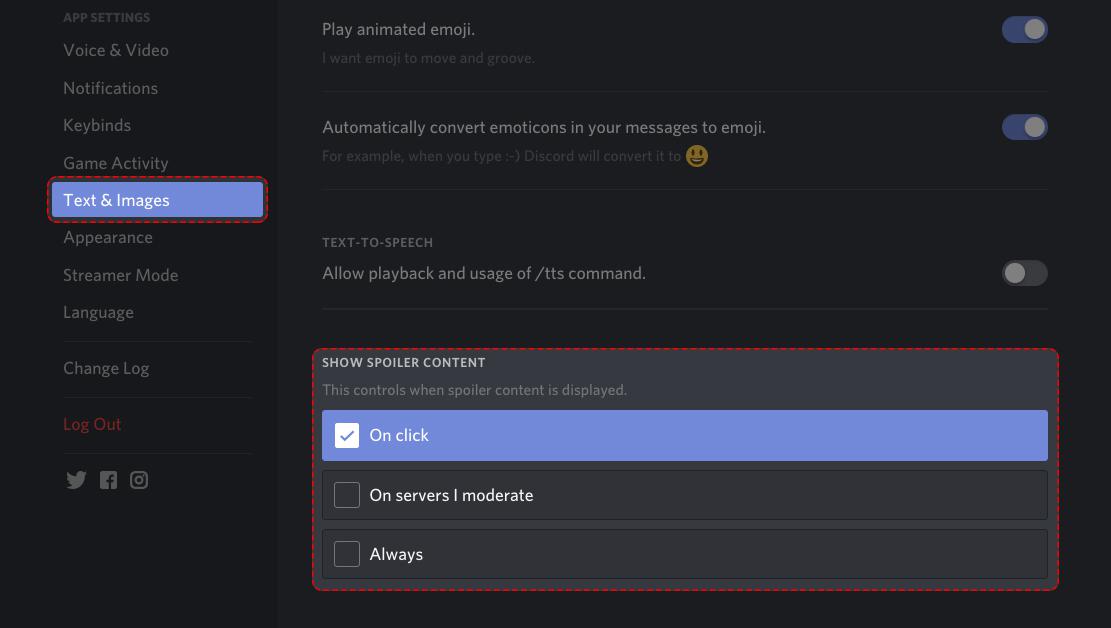

iOS Ionic App: All PDF Files not showing (SintaxError: Unexpected Token '.') · Issue #657 · stephanrauh/ngx-extended-pdf-viewer · GitHub

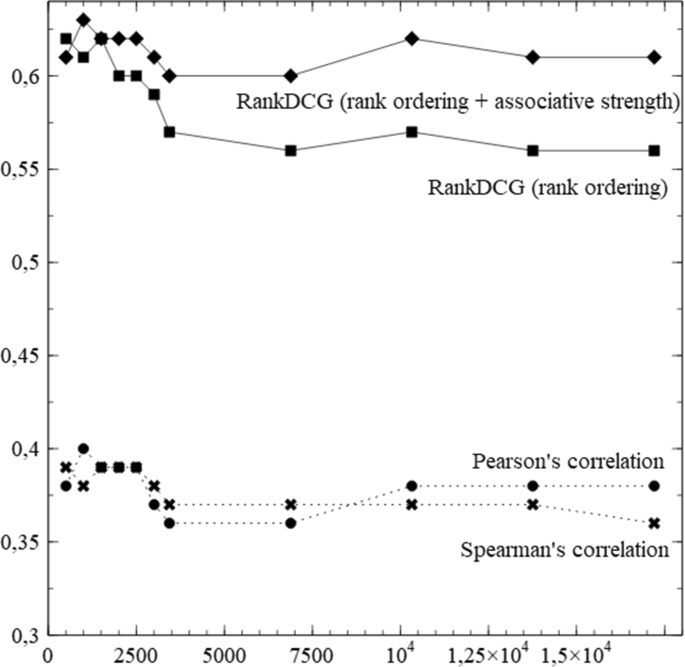

Measuring associational thinking through word embeddings

How does word2vec deal with multi-sense words such as 'sense' and multi-word fragments such as 'common sense'? Are these not major limitations to the embedding approach to NLP in general? - Quora

SecLists/Discovery/Web-Content/CGI-HTTP-POST.fuzz.txt at master · danielmiessler/SecLists · GitHub

(PDF) Unsupervised learning of cross-modal mappings between speech and text

(PDF) Semantic Similarity from Natural Language and Ontology Analysis

Analogical inference from distributional structure: What recurrent neural networks can tell us about word learning - ScienceDirect

(PDF) Evaluating Word Embedding Models: Methods and Experimental Results
