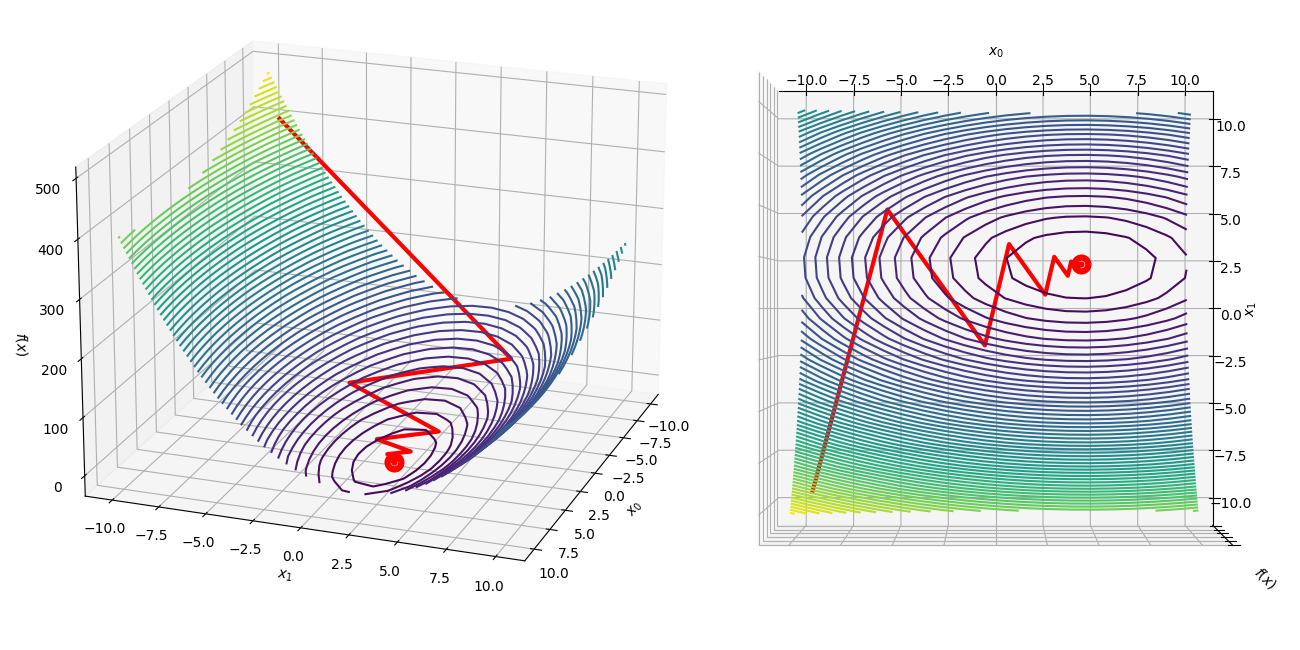

Visualizing the gradient descent method

By a mysterious writer

Description

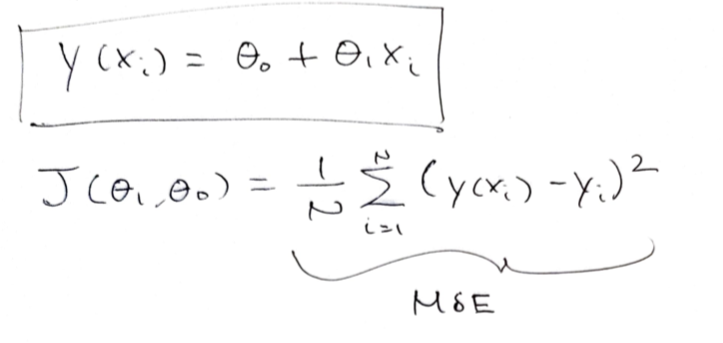

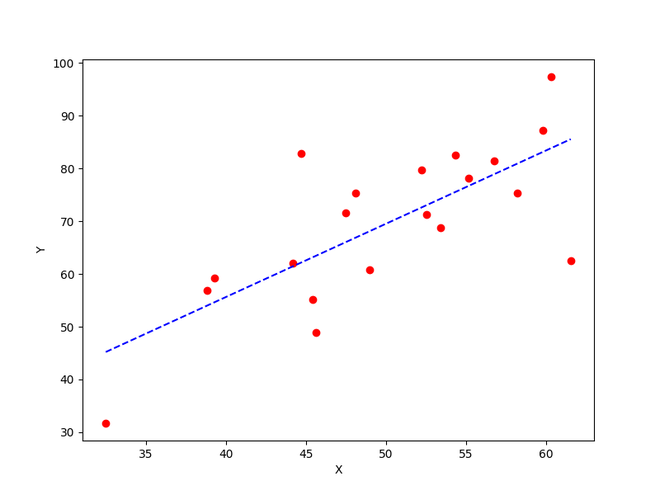

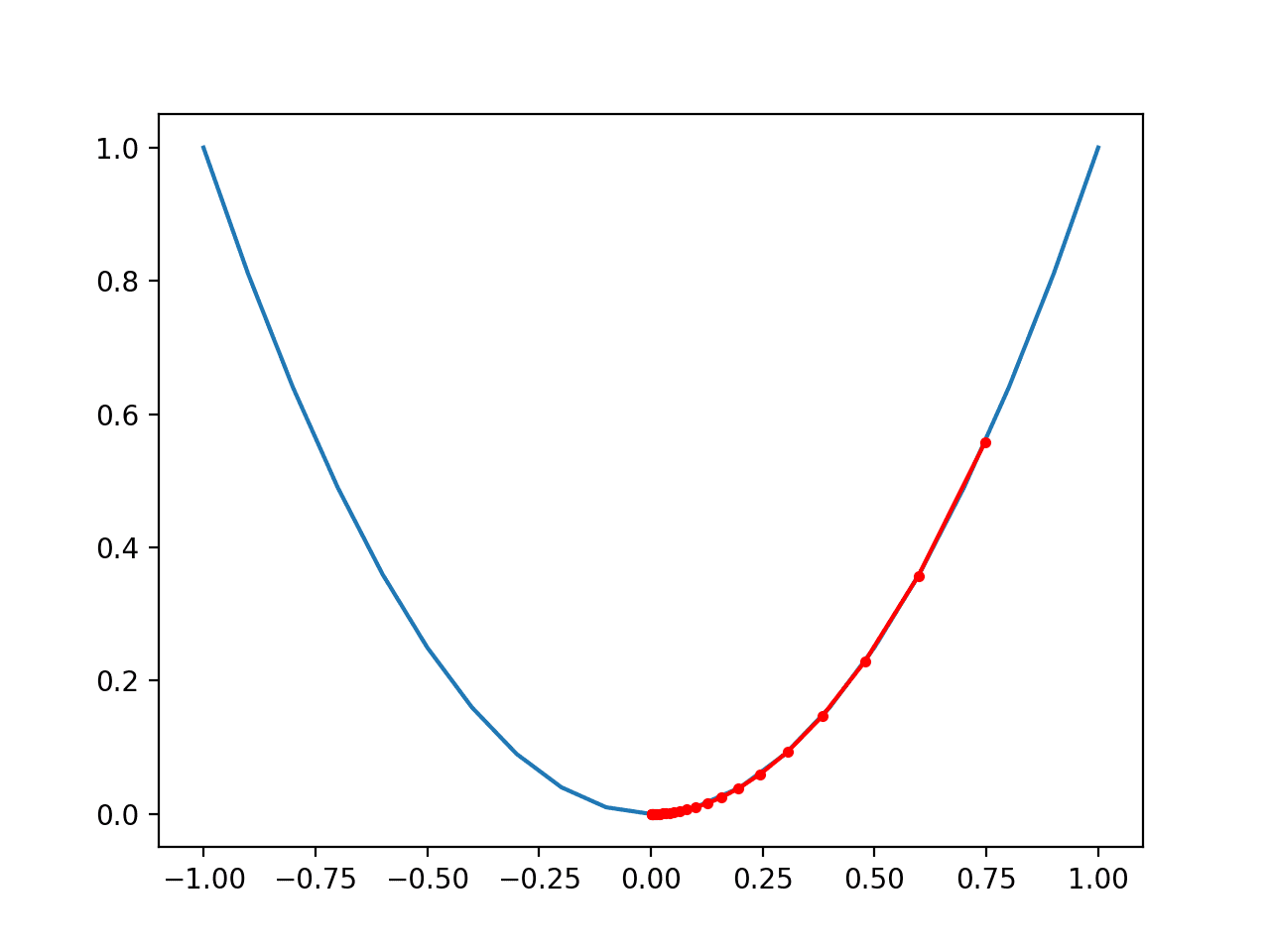

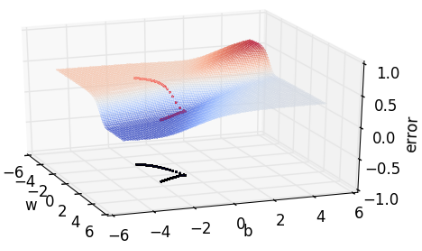

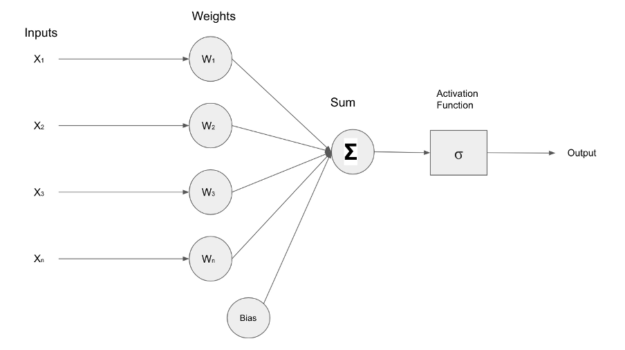

In the gradient descent method of optimization, a hypothesis function, $h_{\boldsymbol{\theta}}(x)$, is fitted to a data set, $(x^{(i)}, y^{(i)})$ ($i=1,2,\cdots,m$), by minimizing an associated cost function, $J(\boldsymbol{\theta})$, with respect to the parameters $\boldsymbol{\theta} = (\theta_0, \theta_1, \cdots)$. The cost function measures how closely the hypothesis fits the data for a given choice of $\boldsymbol{\theta}$.
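As a minimal sketch of the idea, the snippet below fits a straight line to synthetic data by gradient descent. The text above does not specify the hypothesis or the cost function, so several things here are assumptions made for illustration: a linear hypothesis $h_{\boldsymbol{\theta}}(x) = \theta_0 + \theta_1 x$, a mean-squared-error cost $J(\boldsymbol{\theta}) = \frac{1}{2m}\sum_{i=1}^{m}\bigl(h_{\boldsymbol{\theta}}(x^{(i)}) - y^{(i)}\bigr)^2$, and the learning rate `alpha`, step count `n_steps`, and function names, all of which are hypothetical.

```python
def hypothesis(theta, x):
    """Assumed linear hypothesis: h_theta(x) = theta_0 + theta_1 * x."""
    return theta[0] + theta[1] * x


def cost(theta, xs, ys):
    """Assumed cost: half the mean squared error over the m data points."""
    m = len(xs)
    return sum((hypothesis(theta, x) - y) ** 2 for x, y in zip(xs, ys)) / (2 * m)


def gradient(theta, xs, ys):
    """Partial derivatives of the assumed cost with respect to theta_0 and theta_1."""
    m = len(xs)
    residuals = [hypothesis(theta, x) - y for x, y in zip(xs, ys)]
    g0 = sum(residuals) / m
    g1 = sum(r * x for r, x in zip(residuals, xs)) / m
    return g0, g1


def gradient_descent(xs, ys, alpha=0.5, n_steps=2000):
    """Repeatedly step against the gradient, recording the cost after each step."""
    theta = (0.0, 0.0)  # arbitrary starting guess
    history = [cost(theta, xs, ys)]
    for _ in range(n_steps):
        g0, g1 = gradient(theta, xs, ys)
        theta = (theta[0] - alpha * g0, theta[1] - alpha * g1)
        history.append(cost(theta, xs, ys))
    return theta, history


# Synthetic, noise-free data generated from y = 2 + 3x (illustrative only).
xs = [i / 19 for i in range(20)]
ys = [2.0 + 3.0 * x for x in xs]

theta, history = gradient_descent(xs, ys)
print("fitted parameters:", theta)
print("initial cost:", history[0], "final cost:", history[-1])
```

The recorded `history` of cost values, together with the sequence of `theta` values if you also store them, is what a visualization of the method would typically plot, for example as a path over contours of $J(\boldsymbol{\theta})$.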

A Visual Explanation of Gradient Descent Methods (Momentum, AdaGrad, RMSProp, Adam), by Lili Jiang

From Mystery to Mastery: How Gradient Descent is Reshaping Our World

Visualizing the gradient descent in R · Snow of London

GMU Math MakerLab: A Visualization of Gradient Descent

How to visualize Gradient Descent using Contour plot in Python

Gradient-Based Optimizers in Deep Learning - Analytics Vidhya

Visualizing the Gradient Descent Algorithm.

Gradient descent visualization - hills

Projected gradient descent and projected Newton's method for quadratic

Gradient Descent in Machine Learning: What & How Does It Work

How to implement a gradient descent in Python to find a local minimum ? - GeeksforGeeks

Gradient Descent With Momentum from Scratch

Why Visualize Gradient Descent Optimization Algorithms ?, by ASHISH RANA

A Data Scientist's Guide to Gradient Descent and Backpropagation Algorithms
