Toxicity - a Hugging Face Space by evaluate-measurement

By a mysterious writer

Description

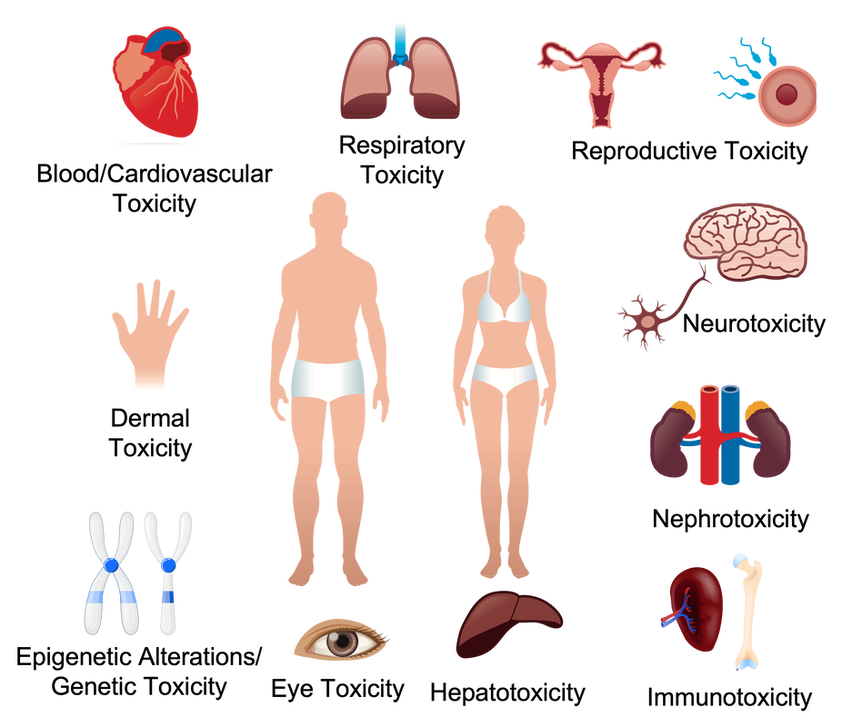

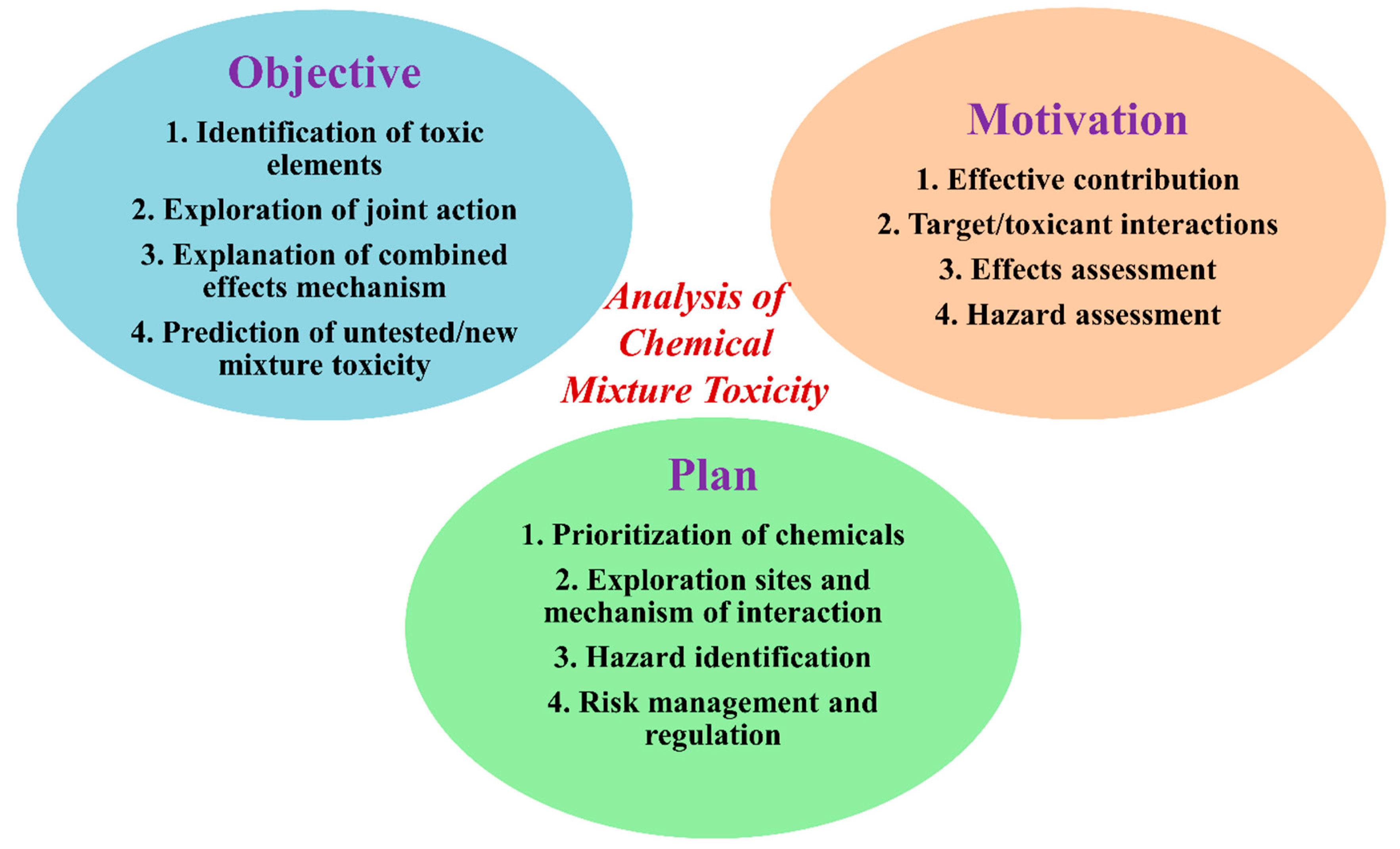

The toxicity measurement aims to quantify the toxicity of the input texts using a pretrained hate speech classification model.
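
In the 🤗 Evaluate library, this measurement is loaded with `evaluate.load("toxicity", module_type="measurement")` and applied with `compute(predictions=...)`. By default it uses the `facebook/roberta-hate-speech-dynabench-r4-target` classifier. The sketch below reproduces only the scoring and aggregation step in plain Python. It uses hypothetical classifier outputs in place of a real model so that it runs offline. The helper names `toxic_scores` and `aggregate` are illustrative. The `"all"`, `"maximum"`, and `"ratio"` modes, the `"hate"` label, and the 0.5 threshold are modeled on the measurement's documented options. Treat it as an approximation, not the library's own code.

```python
from typing import Dict, List, Sequence, Union

# One entry per class, e.g. {"label": "hate", "score": 0.8}, as a
# text-classification model returns when asked for all labels.
LabelScore = Dict[str, Union[str, float]]


def toxic_scores(outputs: Sequence[List[LabelScore]], toxic_label: str = "hate") -> List[float]:
    """Pick the score of `toxic_label` from each text's label/score list."""
    scores = []
    for per_text in outputs:
        matches = [float(d["score"]) for d in per_text if d["label"] == toxic_label]
        if not matches:
            raise ValueError(f"label {toxic_label!r} not found in classifier output")
        scores.append(matches[0])
    return scores


def aggregate(scores: List[float], aggregation: str = "all", threshold: float = 0.5) -> Dict[str, object]:
    """Summarize per-text toxicity scores (hypothetical helper)."""
    if aggregation == "ratio":
        # Fraction of texts whose toxic score reaches the threshold.
        return {"toxicity_ratio": sum(s >= threshold for s in scores) / len(scores)}
    if aggregation == "maximum":
        # Highest toxic score across all texts.
        return {"max_toxicity": max(scores)}
    # Default: return every per-text score unchanged.
    return {"toxicity": scores}


if __name__ == "__main__":
    # Hypothetical outputs for two texts, not real model predictions.
    fake_outputs = [
        [{"label": "nothate", "score": 0.9}, {"label": "hate", "score": 0.1}],
        [{"label": "hate", "score": 0.8}, {"label": "nothate", "score": 0.2}],
    ]
    scores = toxic_scores(fake_outputs)
    print(aggregate(scores))             # {'toxicity': [0.1, 0.8]}
    print(aggregate(scores, "maximum"))  # {'max_toxicity': 0.8}
    print(aggregate(scores, "ratio"))    # {'toxicity_ratio': 0.5}
```

Returning raw per-text scores by default keeps every detail. The `"maximum"` and `"ratio"` modes reduce a batch to a single number, which is easier to track when comparing models.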

Meta's SeamlessM4T AI Model Translates Voice, Text into 100 Languages

tools-for-participatory-evaluation by Nikolaos Floratos - Issuu

Detoxifying a Language Model using PPO

Holistic Evaluation of Language Models - Gradient Flow

Human Evaluation of Large Language Models: How Good is Hugging Face's BLOOM?

Continuous LLM Monitoring - Observability to ensure Responsible AI

AI News, 13 December 2023 (1st Edition): Models hosted on Hugging Face, edge computing with

Large-Scale Distributed Training of Transformers for Chemical Fingerprinting

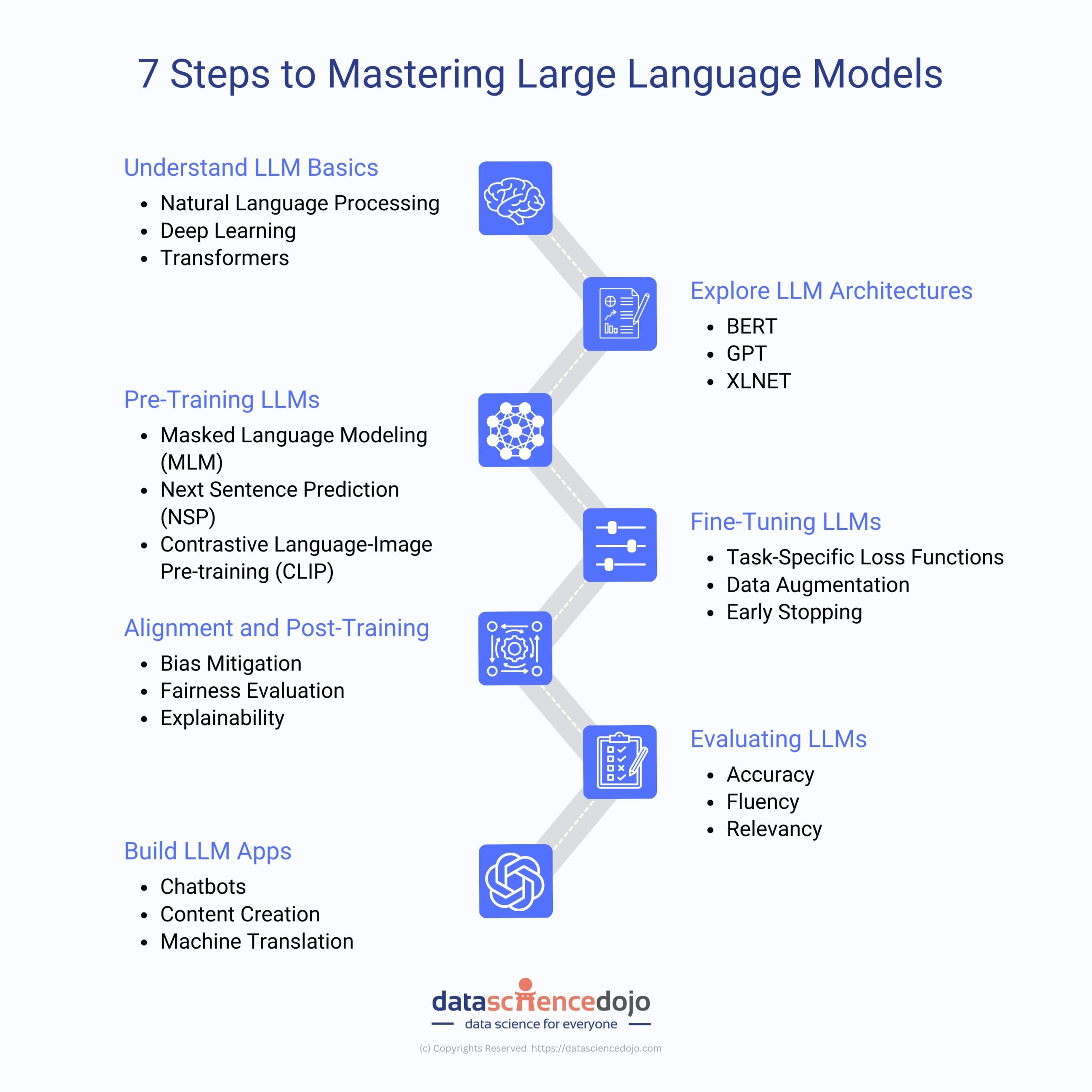

LLM Data Science Dojo

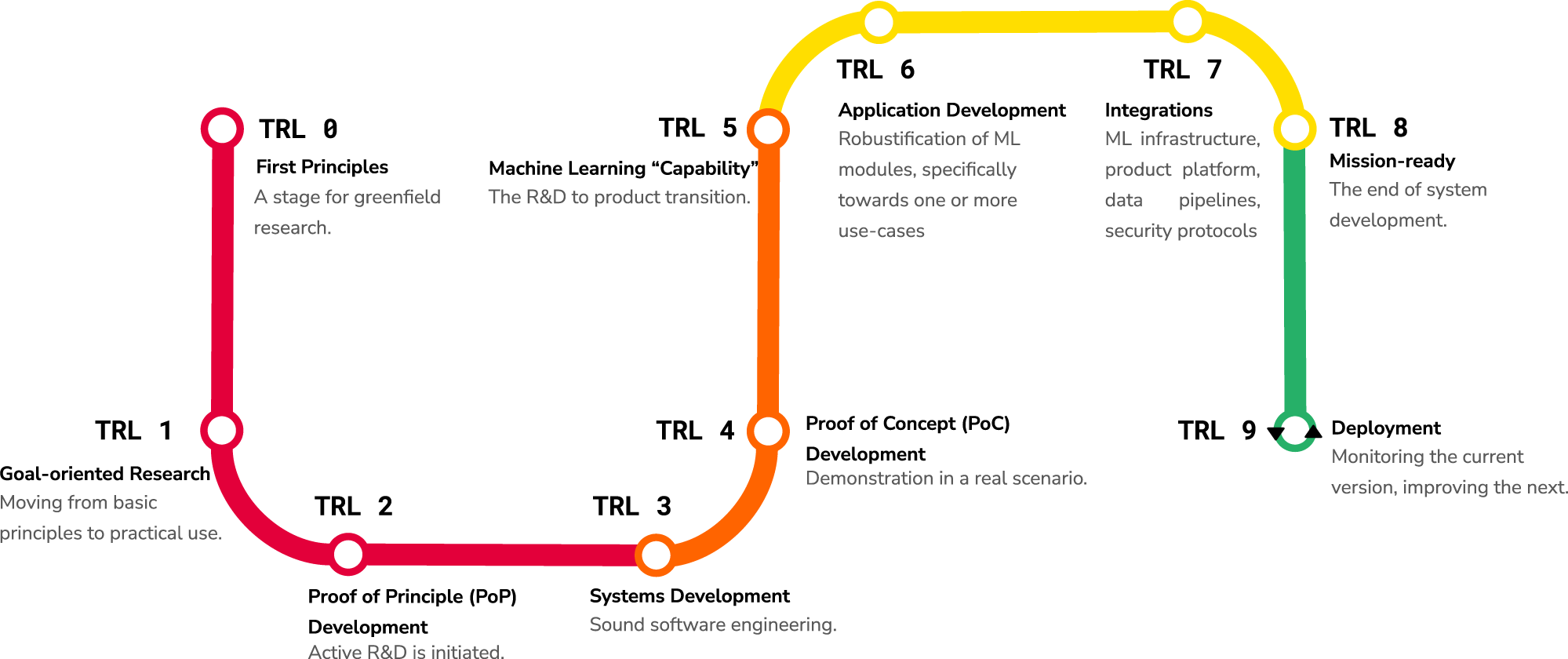

Technology readiness levels for machine learning systems

The model and task pipeline. We experiment with approaches for

Generative AI and large language models: background and contexts

GitHub - huggingface/evaluate: 🤗 Evaluate: A library for easily evaluating machine learning models and datasets.

Jigsaw Unintended Bias in Toxicity Classification, by Pulkit Ratna Ganjeer
